What is preprocessing scale?

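The preprocessing.scale() function in scikit-learn standardizes each feature (column) of your data so that it has a mean of 0 and a standard deviation of 1, putting all features on one common scale. This is helpful when values are spread out across very different magnitudes. For example, the values of X may look like this: X = [1, 4, 400, 10000, 100000]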
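As a minimal sketch, assuming scikit-learn and NumPy are installed, the example values of X can be passed to `preprocessing.scale()`. The function expects a 2-D array, so the values are reshaped into a single column (one feature with five samples).

```python
import numpy as np
from sklearn import preprocessing

# The example values from the text, as one feature (column) with five samples
X = np.array([1, 4, 400, 10000, 100000], dtype=float).reshape(-1, 1)

# scale() standardizes each column: subtract the column mean, divide by its std
X_scaled = preprocessing.scale(X)

print(X_scaled.ravel())
print(X_scaled.mean(), X_scaled.std())  # approximately 0.0 and 1.0
```

The order of the values is preserved; only their location and spread change.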

What is feature scaling in data preprocessing?

Feature scaling is a method used to normalize the range of independent variables or features of data. In data processing, it is also known as data normalization and is generally performed during the data preprocessing step.

What is StandardScaler in machine learning?

In machine learning, StandardScaler is used to rescale the distribution of values so that the mean of the observed values is 0 and the standard deviation is 1.

Why is StandardScaler used?

StandardScaler transforms the data so that it has a mean of 0 and a standard deviation of 1. In short, it standardizes the data. It also works on data that contains negative values. It only shifts and rescales each feature, so it does not change the shape of the distribution: data that is already normal becomes standard normal, while skewed data stays skewed.

What is standard scaling?

Standardization is another scaling technique where the values are centered around the mean with a unit standard deviation. This means that the mean of the attribute becomes zero and the resultant distribution has a unit standard deviation.
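To make this concrete, here is a small sketch (assuming scikit-learn and NumPy; the data is made up) that standardizes two features with `StandardScaler` and checks the result against the hand-computed z-score, (x - mean) / standard deviation, per column:

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Two features on very different scales (illustrative values)
X = np.array([[1.0, 200.0],
              [2.0, 400.0],
              [3.0, 600.0],
              [4.0, 800.0]])

scaler = StandardScaler()
Z = scaler.fit_transform(X)

# The same result by hand: z = (x - mean) / std, computed per column.
# StandardScaler uses the population standard deviation (ddof=0).
Z_manual = (X - X.mean(axis=0)) / X.std(axis=0)

print(Z)
print(np.allclose(Z, Z_manual))  # True
```

Both columns end up with mean 0 and standard deviation 1, even though the second one started 200 times larger.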

Why is scaling important?

Dental scaling, which is not as painful as it sounds, is a way to maintain a cleaner mouth and prevent future plaque build-up. Though going to the dentist for this procedure is not anyone’s favorite pastime, it will help you keep your mouth healthy for longer.

What is scaling the data?

Scaling means transforming your data so that it fits within a specific scale, like 0-100 or 0-1. You want to scale data when you’re using methods based on measures of how far apart data points are, such as support vector machines (SVM) or k-nearest neighbors (KNN).
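The sketch below illustrates why distance-based methods care about scale. It uses made-up [age, income] rows and asks which row is closest to the first one by Euclidean distance, before and after scaling both features to [0, 1] with scikit-learn's `MinMaxScaler`. The data and the helper `nearest_to_first` are hypothetical.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

# Hypothetical data: [age in years, income in dollars]
X = np.array([[25.0, 50000.0],   # query point A
              [60.0, 50500.0],   # B: very different age, similar income
              [26.0, 52000.0]])  # C: similar age, income differs by 2000

def nearest_to_first(data):
    """Index (1 or 2) of the row closest to row 0 by Euclidean distance."""
    dists = np.linalg.norm(data[1:] - data[0], axis=1)
    return int(np.argmin(dists)) + 1

# Unscaled: income differences dominate, so B looks closest
print(nearest_to_first(X))  # 1

# After min-max scaling both features to [0, 1], age counts too, and C is closest
X_scaled = MinMaxScaler().fit_transform(X)
print(nearest_to_first(X_scaled))  # 2
```

Because income is measured in tens of thousands, it dominates the raw distance; after scaling, the full age spread counts as much as the full income spread.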

What is scaler transform?

The idea behind StandardScaler is that it will transform your data such that its distribution will have a mean value 0 and standard deviation of 1. In case of multivariate data, this is done feature-wise (in other words independently for each column of the data).

Should I use MinMaxScaler or StandardScaler?

StandardScaler is useful for features that follow a normal distribution. MinMaxScaler may be used when the upper and lower boundaries are well known from domain knowledge (e.g. pixel intensities that go from 0 to 255 in the RGB color range).
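When the bounds are known from domain knowledge, you can use them instead of the observed minimum and maximum. One way to sketch this, assuming scikit-learn and hypothetical 8-bit pixel values, is to fit `MinMaxScaler` on the known bounds [0, 255] rather than on the data itself:

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

# Hypothetical 8-bit pixel intensities; this sample happens not to span 0..255
pixels = np.array([[12.0], [80.0], [200.0]])

# Fitting on the data itself uses the observed min/max (12 and 200)
data_fit = MinMaxScaler().fit_transform(pixels)

# Using the known domain bounds instead: fit the scaler on [0, 255]
bounds_scaler = MinMaxScaler().fit(np.array([[0.0], [255.0]]))
domain_fit = bounds_scaler.transform(pixels)

print(data_fit.ravel())    # 12 maps to 0 and 200 maps to 1
print(domain_fit.ravel())  # equivalent to pixels / 255
```

Fitting on the sample alone stretches 12..200 to the full [0, 1] range, so the same raw intensity could map to different values from one image to the next.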

What is the difference between MinMaxScaler and StandardScaler?

StandardScaler is based on the standard normal distribution (SND): it shifts each feature to mean = 0 and scales it to unit variance. MinMaxScaler scales each feature into the range [0, 1] by default, or into another range such as [-1, 1] if you set its feature_range parameter. … This range is also called an Interquartile range.
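A small side-by-side sketch, assuming scikit-learn and illustrative values that include a negative number, shows the different outputs: StandardScaler centers and rescales without fixed bounds, while MinMaxScaler stays in [0, 1] unless another `feature_range` is requested.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, StandardScaler

x = np.array([[-4.0], [0.0], [2.0], [10.0]])  # illustrative values, including a negative

std = StandardScaler().fit_transform(x)                      # mean 0, unit variance, unbounded
mm01 = MinMaxScaler().fit_transform(x)                       # default feature_range=(0, 1)
mm11 = MinMaxScaler(feature_range=(-1, 1)).fit_transform(x)  # explicitly requested [-1, 1]

print(std.ravel())
print(mm01.ravel())  # stays within [0, 1] even though x has a negative value
print(mm11.ravel())
```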

What is the difference between fit and fit_transform?

In summary, fit performs the training, transform changes the data in the pipeline in order to pass it on to the next stage in the pipeline, and fit_transform does both the fitting and the transforming in one possibly optimized step. For StandardScaler, fit computes the mean and standard deviation to be used for later scaling.
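A minimal sketch with StandardScaler (assuming scikit-learn; illustrative data) showing that calling `fit` and then `transform` gives the same result as `fit_transform`, and where the learned mean and standard deviation are stored (`mean_` and `scale_`):

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

X = np.array([[1.0, 10.0], [2.0, 20.0], [3.0, 60.0]])  # illustrative data

# Two steps: fit learns the per-column mean and std, transform applies them
scaler = StandardScaler()
scaler.fit(X)
print(scaler.mean_)   # learned column means: 2 and 30
print(scaler.scale_)  # learned column standard deviations
two_step = scaler.transform(X)

# One step: fit_transform does both and gives the same result here
one_step = StandardScaler().fit_transform(X)
print(np.allclose(two_step, one_step))  # True
```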

Why do we use MinMaxScaler?

Transform features by scaling each feature to a given range. This estimator scales and translates each feature individually such that it is in the given range on the training set, e.g. between zero and one.
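The per-feature computation can be written out by hand and compared with `MinMaxScaler`. This is a sketch assuming scikit-learn, with illustrative data and a hypothetical target range of (0, 10), chosen so the final stretch step is visible:

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

X = np.array([[1.0, -5.0], [3.0, 0.0], [5.0, 15.0]])  # illustrative data
lo, hi = 0.0, 10.0  # hypothetical target range

# Per-feature formula: map to [0, 1] using each column's min/max, then stretch to [lo, hi]
X_std = (X - X.min(axis=0)) / (X.max(axis=0) - X.min(axis=0))
X_manual = X_std * (hi - lo) + lo

X_sklearn = MinMaxScaler(feature_range=(lo, hi)).fit_transform(X)
print(np.allclose(X_manual, X_sklearn))  # True
```

Each column is handled independently, so the second column's larger spread does not affect the first.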

Why do we use fit_transform?

fit_transform() is used on the training data so that we can scale the training data and also learn the scaling parameters of that data. … These learned parameters are then used to scale our test data.
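A minimal sketch of that workflow, assuming scikit-learn and made-up one-feature data: the scaler learns its mean and standard deviation from the training set, and only `transform` is applied to the test set.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

X_train = np.array([[10.0], [20.0], [30.0]])  # illustrative training data
X_test = np.array([[40.0]])                   # illustrative unseen data

scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)  # learns mean=20 from training data only
X_test_scaled = scaler.transform(X_test)        # reuses the training mean and std

# Calling fit_transform on X_test would instead re-learn parameters
# from the test data, so train and test would no longer share one scale.
print(scaler.mean_, X_test_scaled)
```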

What is preprocessing in Sklearn?

The sklearn.preprocessing package provides several common utility functions and transformer classes to change raw feature vectors into a representation that is more suitable for the downstream estimators. In general, learning algorithms benefit from standardization of the data set.
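As an illustration of a transformer feeding a downstream estimator, the sketch below chains `StandardScaler` and a k-nearest-neighbors classifier with `make_pipeline`, using the Iris dataset bundled with scikit-learn. The choice of classifier and dataset is only an example.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# The transformer (StandardScaler) passes standardized features to the estimator
model = make_pipeline(StandardScaler(), KNeighborsClassifier())
model.fit(X_train, y_train)  # fits the scaler, then the classifier, on training data
accuracy = model.score(X_test, y_test)  # test data is only transformed, never fitted
print(accuracy)
```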

What is the difference between normalized scaling and standardized scaling?

| S.No. | Normalization | Standardization |
|---|---|---|
| 8 | It is often called Scaling Normalization. | It is often called Z-Score Normalization. |

How do you normalize?

- Calculate the range of the data set. …

- Subtract the minimum x value from the value of this data point. …

- Insert these values into the formula and divide. …

- Repeat with additional data points.
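The steps above can be sketched in plain Python. The function name `normalize` is hypothetical, and it raises an error when all values are equal, since the range would then be zero:

```python
def normalize(values):
    """Min-max normalize a list of numbers to [0, 1]."""
    lo, hi = min(values), max(values)
    data_range = hi - lo  # step 1: range of the data set
    if data_range == 0:
        raise ValueError("all values are equal; the range is zero")
    # steps 2-4: subtract the minimum from each point, divide by the range, repeat
    return [(x - lo) / data_range for x in values]

print(normalize([2, 4, 6]))  # [0.0, 0.5, 1.0]
```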

What is scaling and its types?

Definition: A scaling technique is a method of placing respondents along a continuum of gradual change in pre-assigned values, symbols or numbers, based on the features of a particular object and according to defined rules. All scaling techniques are based on four pillars: order, description, distance and origin.

What are the types of scaling?

- Nominal Scale.

- Ordinal Scale.

- Interval Scale.

- Ratio Scale.

What is scale variable?

Essentially, a scale variable is a measurement variable — a variable that has a numeric value. … This could be an issue if you’ve assigned numbers to represent categories, so you should define each variable within the measurement area individually.

How often should scaling be done?

Plaque formation on the teeth is a continuous process. If plaque is not removed by brushing, it starts mineralizing into tartar within 10-14 hours. People in whom this happens may require periodic scaling, every 6 months or so.

Is Scaling good for teeth?

Fact: Scaling removes tartar and keeps teeth and gums healthy. Scaling and deep cleaning of the gums prevent bad breath and bleeding gums. Thus, scaling is beneficial.

Does tooth scaling hurt?

Teeth scaling and root planing can cause some discomfort, so you’ll receive a topical or local anesthetic to numb your gums. You can expect some sensitivity after your treatment. Your gums might swell, and you might have minor bleeding, too.

Where is StandardScaler used?

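Use StandardScaler if you want each feature to have zero mean and unit standard deviation. It does not make data more normally distributed; if that is what you want, and you are okay with transforming your data non-linearly, scikit-learn's PowerTransformer or QuantileTransformer are the usual choices.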

What is Python StandardScaler?

StandardScaler removes the mean and scales each feature/variable to unit variance. This operation is performed feature-wise in an independent way. StandardScaler can be influenced by outliers (if they exist in the dataset) since it involves the estimation of the empirical mean and standard deviation of each feature.
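To see the outlier effect, this sketch (assuming scikit-learn and NumPy; illustrative values) standardizes the same small column with and without one extreme value and compares how spread out the four ordinary values are afterwards:

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

clean = np.array([[1.0], [2.0], [3.0], [4.0], [5.0]])
with_outlier = np.array([[1.0], [2.0], [3.0], [4.0], [1000.0]])  # last value is an outlier

z_clean = StandardScaler().fit_transform(clean)
z_outlier = StandardScaler().fit_transform(with_outlier)

# The outlier inflates the estimated mean and std, so the four ordinary
# values get squeezed into a much narrower band after scaling.
spread_clean = np.ptp(z_clean[:4])
spread_outlier = np.ptp(z_outlier[:4])
print(spread_clean, spread_outlier)
```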

Should I normalize or standardize data?

Normalization is useful when your data has varying scales and the algorithm you are using does not make assumptions about the distribution of your data, such as k-nearest neighbors and artificial neural networks. Standardization works best when your data has a Gaussian (bell curve) distribution, although it does not strictly require one.

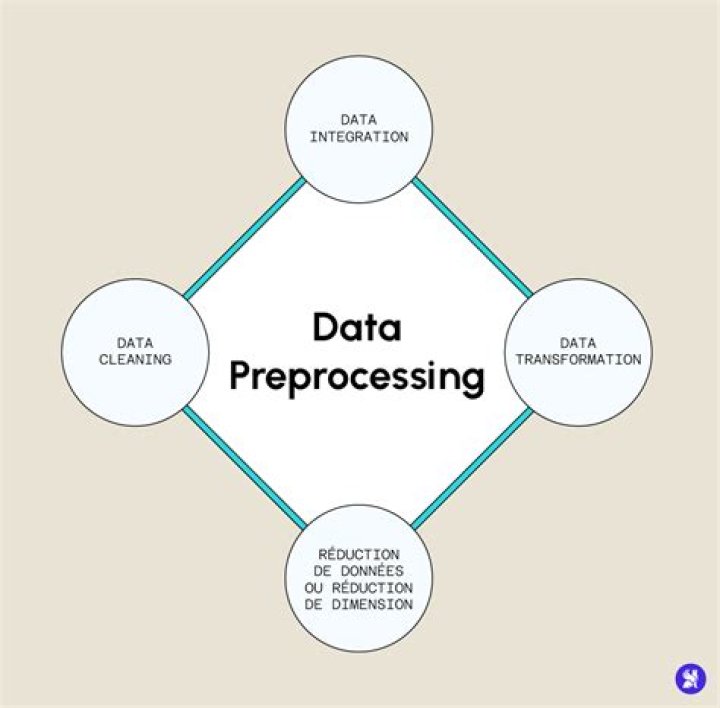

What is preprocessing in Python?

Pre-processing refers to the transformations applied to our data before feeding it to the algorithm. Data Preprocessing is a technique that is used to convert the raw data into a clean data set.

What is fit in Python?

fit() is implemented by every estimator. It accepts the sample data (X) and, for supervised models, an argument for the labels (i.e. the target data y). Optionally, it can also accept additional sample properties such as sample weights. The fit method is responsible for learning the estimator's parameters from that data.
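A short sketch of both call forms, assuming scikit-learn: an unsupervised transformer fit on X only, and a supervised `LinearRegression` fit on X and y with the optional `sample_weight`. The data is made up so that y = 2x + 1 exactly.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.preprocessing import StandardScaler

X = np.array([[0.0], [1.0], [2.0], [3.0]])
y = np.array([1.0, 3.0, 5.0, 7.0])        # exactly y = 2x + 1
weights = np.array([1.0, 1.0, 2.0, 2.0])  # illustrative per-sample weights

# Unsupervised estimator: fit takes only X (y is accepted but ignored)
scaler = StandardScaler().fit(X)

# Supervised estimator: fit takes X and y, plus optional sample_weight
reg = LinearRegression().fit(X, y, sample_weight=weights)
print(reg.coef_, reg.intercept_)  # approximately [2.] and 1.0
```

Because the data lies exactly on a line, the weights do not change the fitted coefficients here; with noisy data they would shift the fit toward the heavier samples.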

What is MIN-MAX scaling?

Rescaling (min-max normalization), also known as min-max scaling, is the simplest method: it rescales the range of each feature to [0, 1] or [−1, 1]. Which target range to select depends on the nature of the data.
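For a general target range [a, b], the rescaling of a value x of one feature can be written as follows, where min(x) and max(x) are taken over that feature; a = 0, b = 1 gives the [0, 1] case and a = −1, b = 1 gives [−1, 1]:

```latex
x' = a + \frac{\left(x - \min(x)\right)(b - a)}{\max(x) - \min(x)}
```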

Does scaling affect outliers?

Scaling data according to the quantile range rather than the standard deviation (as scikit-learn's RobustScaler does) reduces the range of your features while keeping the outliers in.
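This quantile-range approach is what scikit-learn's `RobustScaler` does by default: it centers on the median and divides by the interquartile range (25th to 75th percentile). A minimal sketch with one illustrative outlier:

```python
import numpy as np
from sklearn.preprocessing import RobustScaler

x = np.array([[1.0], [2.0], [3.0], [4.0], [1000.0]])  # last value is an outlier

# Median is 3 and the interquartile range is 4 - 2 = 2, so each value maps to (x - 3) / 2
robust = RobustScaler().fit_transform(x)
print(robust.ravel())  # -1, -0.5, 0, 0.5, 498.5
```

The ordinary values keep a usable spread, while the outlier is still far out at 498.5, so it is kept rather than removed.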

Is MinMaxScaler normalization?

Yes. MinMaxScaler performs min-max normalization. When normalizing the training and test data sets, the MinMaxScaler estimator is fit on the training data set, and that same fitted estimator is used to transform both the training and the test data set.
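A minimal sketch of that workflow, assuming scikit-learn and illustrative data. It also shows that test values outside the training range are mapped outside [0, 1], because the scaler only knows the training minimum and maximum:

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler

X_train = np.array([[0.0], [50.0], [100.0]])  # illustrative training data
X_test = np.array([[25.0], [150.0]])          # 150 lies outside the training range

scaler = MinMaxScaler().fit(X_train)  # learns min=0 and max=100 from training data only
train_scaled = scaler.transform(X_train)
test_scaled = scaler.transform(X_test)

print(train_scaled.ravel())  # 0.0, 0.5, 1.0
print(test_scaled.ravel())   # 0.25, 1.5: test values can fall outside [0, 1]
```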

What is difference between normalization and standardization?

Normalization typically means rescaling the values into a range of [0, 1]. Standardization typically means rescaling data to have a mean of 0 and a standard deviation of 1 (unit variance).